The LIMRA (Life Insurance Marketing and Research Association) 2025 Workplace Benefits Conference takes place April 23-25, 2025, at the Encore Boston Harbor in Boston, MA. With this year’s theme, Pathways to Growth, industry leaders and participants will examine the business and technology trends affecting the North American workplace benefits market. Speakers and attendees, primarily carriers and brokers of life, health, and related insurance coverage, and providers of employee benefits and technology solutions, will explore strategies for growth while addressing shifting consumer needs, the latest digital tools and technologies, and an increasing focus on innovation, outcomes and collaboration.

Venkat Laksh, Iris’ Global Lead – Insurance, and Senior Client Partner, will attend this forum. Iris provides leading insurance companies with advanced InsurTech services and solutions, including Software and Quality Engineering, AI/ML/Generative AI, Application Modernization, Automation, Cloud, Data Science, Enterprise Analytics and Integrations. These companies are applying next-generation, emerging technology through Iris to ensure their enterprises are future-ready, scalable, secure, cost-efficient, and compliant.

Talk about your digital priorities with Venkat at the LIMRA 2025 Workplace Benefits Conference and connect with our InsurTech team anytime to advance your digital transformation goals: Insurance Technology Services | Iris Software.

Contact

Our experts can help you find the right solutions to meet your needs.

Enterprise-Grade DevOps for Scalable Blockchain DLT

Client

A leading provider of real-world asset (RWA) tokenization, digital currency, and interoperability solutions to the world’s largest financial players

Goal

To optimize blockchain DLT platforms for scalability, resilience, and seamless operations through enterprise-grade DevOps and SRE practices

Tools and Technologies

R3 Corda 5, Azure, AWS, G42, Docker, Kubernetes, Helm Charts, Terraform, Ansible, GitHub Actions, Azure DevOps, Jenkins, Prometheus, Grafana, Slack Integration

Business Challenge

Complex multi-node deployments required a mechanism to upgrade CorDapps, notaries, and workers across network participants without downtime or compatibility issues. Meanwhile, security and compliance risks demanded strict access controls, network segmentation, and security hardening to protect Corda nodes and ledger operations.

For infrastructure scalability and automation, the client needed an efficient approach to onboarding new participants and managing network topology across cloud environments. There was also no real-time monitoring system, so the platform needed a way to detect transaction failures, track node health, and provide proactive alerts.

To address security vulnerabilities, continuous scanning and security enforcement across CorDapps, containerized nodes, and CI/CD pipelines were required.

Solution

- Enabled zero-downtime CorDapp deployments with automated rollback and stateful upgrades, ensuring stability and ledger integrity

- Secured Corda nodes with RBAC, network segmentation, and security hardening, while optimizing autoscaling for dynamic ledger workloads

- Automated Corda network topology and participant onboarding using modular Terraform & Ansible configurations, ensuring scalability and repeatability

- Implemented real-time monitoring with Prometheus & Grafana, with Slack-based alerts for transaction failures and node health anomalies

- Ensured high availability and auto-healing for Corda network nodes and ledger operations

- Integrated DevSecOps with automated vulnerability scanning for CorDapps, containerized nodes, and CI/CD pipelines
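
The alerting behavior described in the monitoring bullet above can be illustrated with a minimal sketch. The `NodeSample` fields, the 5% failure-rate threshold, and the message wording are hypothetical and chosen only for illustration; in a Prometheus and Grafana setup such a rule would more likely be written as an alerting rule and routed to Slack by the alerting layer than coded by hand.

```python
from dataclasses import dataclass


@dataclass
class NodeSample:
    """One scrape of a node's counters (hypothetical fields)."""
    node: str
    healthy: bool
    tx_total: int
    tx_failed: int


def build_alerts(samples, max_failure_rate=0.05):
    """Return alert messages for unhealthy nodes or high failure rates."""
    alerts = []
    for s in samples:
        if not s.healthy:
            alerts.append(f"{s.node}: node health check failing")
        # Guard against division by zero when a node has seen no traffic.
        rate = s.tx_failed / s.tx_total if s.tx_total else 0.0
        if rate > max_failure_rate:
            alerts.append(f"{s.node}: transaction failure rate {rate:.1%}")
    return alerts


samples = [
    NodeSample("notary-1", True, 1000, 10),
    NodeSample("worker-2", True, 200, 30),
    NodeSample("worker-3", False, 0, 0),
]
alerts = build_alerts(samples)
for line in alerts:
    print(line)
```

Each returned string stands in for one message that would be posted to a Slack channel.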

Outcomes

- CI/CD automation accelerated blockchain deployment cycles, increasing deployment frequency by 40% and reducing failures by 60%

- Efficient resource management of Kubernetes workloads for Corda 5 delivered 15% cost savings and improved ledger performance

- Scalable & secure blockchain infrastructure reduced manual intervention by 30%, enabling seamless scaling of Corda network participants

- Proactive incident management for DLT networks through real-time monitoring cut response time by 50% for blockchain issues, ensuring high availability

- Automated workflows accelerated development cycles by 25%, enhancing collaboration across blockchain and DevOps teams

Streamlining policy administration with low-code development

Client

A leading home insurance enterprise specializing in policy administration solutions

Goal

Develop policy administration applications for partner insurance carriers with seamless third-party and home-grown app integration

Tools and Technologies

Low-Code/No-Code Platform, AWS, Agile Development, Containerization

Business Challenge

The client needed a scalable policy administration solution to support insurance carriers, integrate third-party applications, and enhance policy issuance and settlement.

The goal was to accelerate the go-to-market process for partners and agents while delivering premier services for competitive pricing and superior policy coverage.

Solution

- Designed and developed a policy administration platform using a Low-Code/No-Code application development platform on AWS

- Built three Agile Pod-based teams, each containing five members for rapid iteration and development

- Leveraged containerized development, ensuring each service had its own lifecycle for enhanced flexibility and scalability

- Established weekly release plans with feature flags to enable controlled functionality deployment in production once the business was ready to adopt
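
The feature-flag approach in the last bullet can be sketched as follows. This is a minimal illustration, not the client's implementation: the `FeatureFlags` class, the `bundle_discount` flag name, and the 10% discount are all hypothetical, and the case study's low-code platform may provide its own flag mechanism.

```python
class FeatureFlags:
    """In-memory flag store; a real system would load flags from config."""

    def __init__(self, enabled=None):
        self._enabled = set(enabled or [])

    def enable(self, name):
        self._enabled.add(name)

    def is_enabled(self, name):
        return name in self._enabled


def quote_policy(base_premium, flags):
    """Apply the new discount only when its flag has been switched on."""
    if flags.is_enabled("bundle_discount"):  # hypothetical flag name
        return round(base_premium * 0.9, 2)
    return base_premium


flags = FeatureFlags()
before = quote_policy(1200.0, flags)  # code is deployed, feature stays dark
flags.enable("bundle_discount")       # business opts in, no redeploy needed
after = quote_policy(1200.0, flags)
print(before, after)
```

The point of the pattern is that the weekly release ships the code path, while the flag decides when production users actually see it.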

Outcomes

- 200% growth in policy issuance

- Faster onboarding for agencies and agents

- Improved efficiency and scalability in policy administration

- Accelerated go-to-market for insurance partners and agents

InsurTech NY 2025 Spring Conference

The annual InsurTech NY Spring Conference is April 2-3, 2025, at Chelsea Piers in New York City. The theme of this year’s forum is InsurTech is the New R&D. The event brings together 900+ leaders and innovators in the industry, including carriers and brokers of life, health and disability, property and casualty (P&C), and specialty insurance; investors; and insurtech service providers like Iris Software. Each year, speakers and attendees focus on the technology and business management solutions that will enhance the operations, customer experience, and revenue streams of insurers.

Meet the leaders of our global InsurTech team - Ravi Chodagam, Vice President, Venkat Laksh and Abhineet Jha, Senior Client Partners - at InsurTech NY’s 2025 Spring Conference. As an integral and long-time technology partner to many top life, P&C, and specialty insurers, Iris has vast experience implementing agile and advanced technology and data solutions that ensure clients stay competitive and ahead of rapidly evolving trends in the dynamic insurance industry.

Discuss your tech priorities with Ravi, Venkat and Abhineet at the Spring Conference, or anytime, and learn how leading insurers apply our solutions in AI and Generative AI, Application Modernization, Automation, Cloud, and Data Science & Analytics to ensure their enterprises are future-ready, scalable, secure, cost-efficient, and compliant. You can also contact the team and learn more about our InsurTech Services here: Insurance Technology Services | Iris Software.

How Gen AI Can Transform Software Engineering

Unlocking efficiency across the software development lifecycle, enabling faster delivery and higher quality outputs.

Generative AI has enormous potential for business use cases, and its application to software engineering is equally promising.

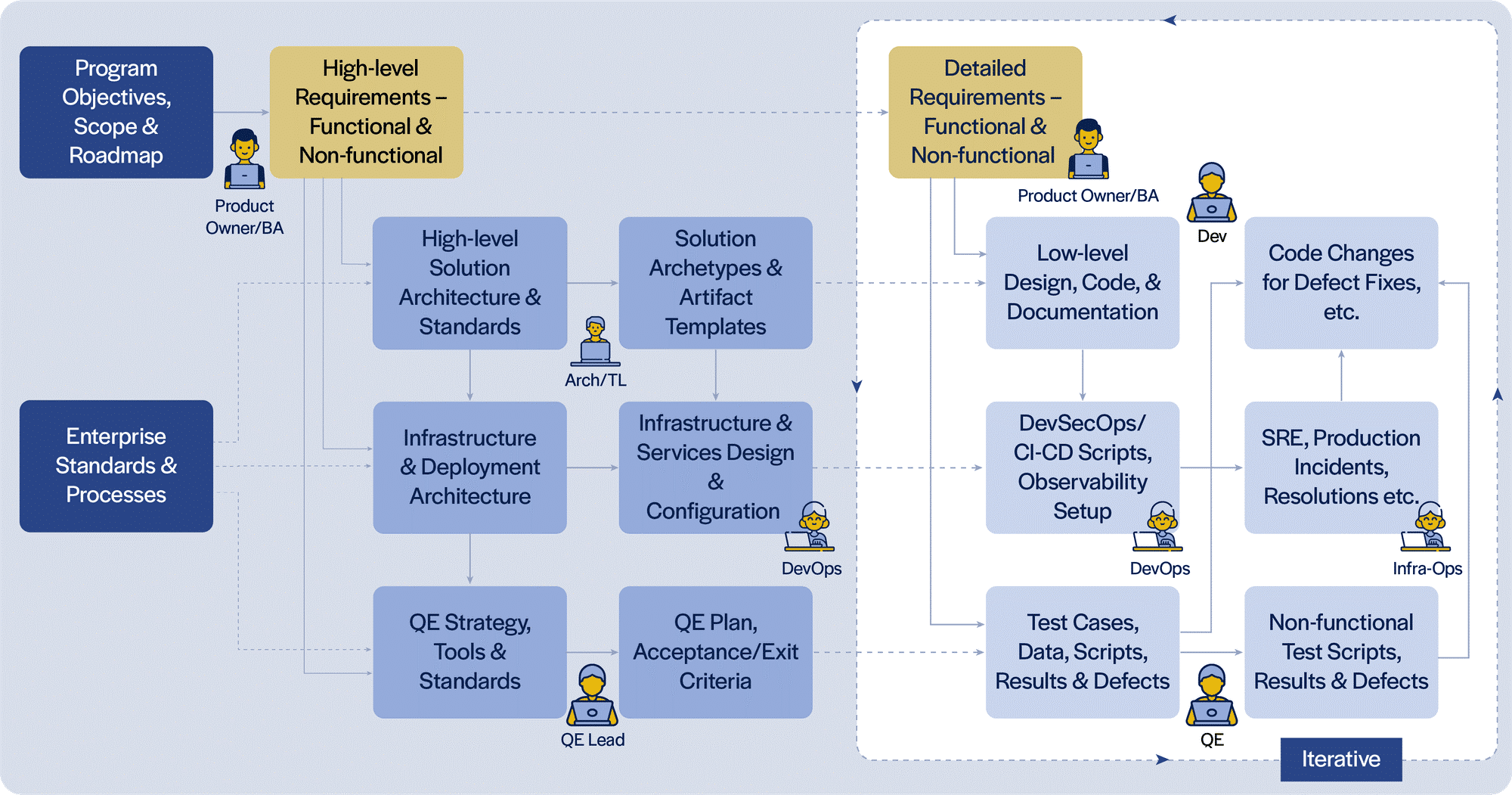

In our experience, development activities, including automated test and deployment scripts, account for only 30-50% of the time and effort spent across the software engineering lifecycle. Within that, only a fraction of the time and effort is spent in actual coding. Hence, to realize the true promise of Generative AI in software engineering, we need to look across the entire lifecycle.

A typical software engineering lifecycle involves a number of different personas (Product Owner, Business Analyst, Architect, Quality Assurance/Tech Leads, Developer, Quality/DevSecOps/Platform Engineers), each using their own tools and producing a distinct set of artifacts. Integrating these different tools through a combination of Gen AI software engineering extensions and services will help streamline the flow of artifacts through the lifecycle, formalize the hand-off reviews, and enable automated derivation of initial versions of related artifacts.

As an art-of-the-possible exercise, we developed extensions (for VS Code IDE and Chrome Browser at this time) incorporating the above considerations. Our early experimentation suggests that Generative AI has the potential to enable more complete and consistent artifacts. This results in higher quality, productivity and agility, reducing churn and cycle time, across parts of the software engineering lifecycle that AI coding assistants do not currently address.

Complementary approaches that automate repetitive activities through smart templating, leveraging Generative AI and traditional artifact generation and completion techniques, can help save time, let the team focus on higher-value activities, and improve overall satisfaction. However, there are key considerations in doing this at scale across many teams and team members. To enable teams to become high-performing, the Gen AI software engineering extensions and services need to standardize and templatize common solution patterns (archetypes) and formalize the definition and automation of the steps that mark each artifact type as done.

Read our perspective paper for more insights on How Gen AI Can Transform Software Engineering through streamlined processes, automated tasks, and augmented collaboration, bringing faster, higher-quality software delivery.

Join us at Reuters Pharma USA 2025

The Reuters Pharma USA 2025 Conference, noted as North America’s largest cross-functional pharmaceutical gathering, is scheduled for March 18-19, 2025, at the Pennsylvania Convention Center in Philadelphia. With the pharmaceutical industry continually pressured by shifts in market trends and participants, product development, consumer sentiment, and government regulations, attendees and speakers at this Conference will be seeking and sharing insights and strategies to best navigate change, remain competitive, and future-proof operational units as well as global enterprises.

Swarnendu Banerjee, client partner and seasoned IT professional for the pharmaceutical and life sciences sectors, will be attending the Reuters Pharma USA 2025 Conference. He will share Iris’ extensive experience in these domains and explain how our advanced capabilities in AI/Generative AI, Application Development, Intelligent Automation, Cloud, Data & Analytics, Integrations, and Quality Engineering have delivered successful outcomes in mission-critical engagements, ensuring quality and compliance, reducing costs, enhancing UX, modernizing, migrating, scaling, accelerating, and streamlining.

Connect with Swarnendu at the Reuters Pharma forum or anytime, or visit our Services and Life Sciences capabilities pages to explore our innovative approach and strategies for end-to-end digital transformation.

Transforming payment processing for banking channels

Client

A leading Australian bank

Goal

Streamline the payment transaction lifecycle to handle increasing volume and complexity

Tools and Technologies

Jenkins, Kubernetes, Spring, Oracle, PostgreSQL, AWS, Docker, Kafka, Java

Business Challenge

Multiple banking channels - mobile, internet and branch - initiate various payment requests (20+ types such as ACH, mandate and book transfer) that need to be processed. Depending on the payment type, the channel was required to invoke one or more services in a specific order as per the associated business rules.

Changes to these payment workflows stemming from introduction of new payment types or revisions of business rules introduced complex and repeated changes to the bank’s systems, hindering scalability.

Solution

- To support lifecycle management of various payment transactions, including defining different payment workflows, we designed an event-driven architecture composed of 40+ microservices (e.g., limit, eligibility, and fraud checks) supported by a Kafka messaging system

- The architecture involved building an orchestration engine (landing service), acting as a front controller for all payment workflow requests from the various banking channels, such as mobile, internet and branch

- The landing service in turn invokes the corresponding service (limit, eligibility, etc.) based on the payment type and business rules associated with it

- Data flow between these microservices (resulting from further invocations) and other downstream systems is facilitated asynchronously with the help of a distributed messaging system (Kafka)

- Using Jenkins, we built a CI/CD pipeline to streamline the workflow by automatically building, testing and deploying code changes as they are committed
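
The front-controller idea behind the landing service can be sketched in a few lines. This is a simplified, synchronous illustration under stated assumptions: the check functions, the `WORKFLOWS` table, the payment fields, and the 10,000 limit are hypothetical, and the architecture described above passes data between microservices asynchronously over Kafka rather than through direct function calls.

```python
# Hypothetical check services; each returns True when the payment passes.
def limit_check(payment):
    return payment["amount"] <= 10_000


def eligibility_check(payment):
    return payment["account_active"]


def fraud_check(payment):
    return not payment.get("flagged", False)


# Business rules: the ordered checks each payment type must pass.
WORKFLOWS = {
    "ACH": [eligibility_check, limit_check, fraud_check],
    "BOOK_TRANSFER": [eligibility_check, limit_check],
}


def landing_service(payment):
    """Front controller: run the workflow for the payment type in order."""
    steps = WORKFLOWS.get(payment["type"])
    if steps is None:
        return {"status": "REJECTED", "reason": "unknown payment type"}
    for step in steps:
        if not step(payment):
            return {"status": "REJECTED", "reason": step.__name__}
    return {"status": "ACCEPTED", "steps": [s.__name__ for s in steps]}


ok = landing_service({"type": "ACH", "amount": 500, "account_active": True})
too_big = landing_service(
    {"type": "BOOK_TRANSFER", "amount": 50_000, "account_active": True}
)
print(ok)
print(too_big)
```

Because the channels only call `landing_service`, supporting a new payment type in this sketch means adding one entry to `WORKFLOWS` rather than changing each channel.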

Outcomes

- Significantly eased the management of payment workflows, including those related to the addition of new payment types (resulting from an acquisition)

- Enabled systems to scale without introducing complex changes at the channels

- Improved reporting, resulting from faster access to data through dedicated microservices

Modernized Payments Hub Improves UX and Compliance

Client

U.S. operations of a leading Japanese bank

Goal

Modernize payments architecture to streamline processing and improve client experience

Tools and Technologies

Jenkins, Kafka, Spring, Oracle, JBoss, React, Elasticsearch, Java, Node.js

Business Challenge

The evolving payments landscape, with the introduction of ISO 20022 and the dynamic nature of the regulatory environment, necessitated advancement in the bank’s payment processing capabilities.

The lack of a modern architecture hindered client experience, with multiple channels initiating various payment types that required complex processing.

Solution

Our team built a centralized payments hub to orchestrate data flows between payment initiation systems and product processors. The steps:

- Designed a flexible and scalable microservices-based architecture to facilitate translation, enrichment and processing of payment transactions

- Built a messaging layer to streamline data flows between systems, through support for various modes of interaction, e.g., MQ, API and file (canonical / industry standards such as NACHA, SWIFT, JSON, etc.)

- Introduced an API gateway to handle multiple payment types and enable channel-agnostic payment capabilities

- Deployed a modular approach to support existing and new systems with isolation of core and product processors and avoid redundancies in capability builds

- Developed a React-based UI as the touchpoint for integrations between the payments hub and other systems
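
The translation step in the messaging layer can be illustrated with a small sketch that maps two interaction modes onto one canonical record. The input layouts and field names here are invented for illustration only; they are not NACHA, SWIFT, or ISO 20022 formats, and the hub's actual canonical model is not described in this case study.

```python
import json


def from_json_api(body):
    """Translate a hypothetical JSON API payload into the canonical form."""
    data = json.loads(body)
    return {
        "debtor": data["from"],
        "creditor": data["to"],
        "amount": float(data["amount"]),
        "currency": data["ccy"],
    }


def from_delimited_file(line):
    """Translate a hypothetical pipe-delimited file record."""
    debtor, creditor, amount, currency = line.strip().split("|")
    return {
        "debtor": debtor,
        "creditor": creditor,
        "amount": float(amount),
        "currency": currency,
    }


TRANSLATORS = {"api": from_json_api, "file": from_delimited_file}


def to_canonical(mode, raw):
    """Dispatch on the interaction mode so downstream code sees one shape."""
    return TRANSLATORS[mode](raw)


a = to_canonical(
    "api", '{"from": "ACC1", "to": "ACC2", "amount": "250.00", "ccy": "USD"}'
)
b = to_canonical("file", "ACC1|ACC2|250.00|USD\n")
print(a == b)
```

Once every channel's input is reduced to the same shape, enrichment and processing code can be written once instead of per channel.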

Outcomes

- A core payments engine capable of seamlessly integrating with multiple, complex systems

- Superior client experience, resulting from a holistic view spanning initiation, payment rails, and clearing

- A modernized payments platform that is ISO 20022-compliant and future-ready for processing and reporting needs

- Faster implementation of functionalities for payment processors

Automated Scheduling Bots Boost Productivity by 50%

Client

Leading supply chain brokerage

Goal

Automate the manual, supply-chain scheduling process to improve staff productivity and customer satisfaction

Tools and Technologies

UiPath Orchestrator, UiPath Assistant, Microsoft Power BI, Office 365

Business Challenge

A supply chain logistics provider’s operations team was struggling with the high volume of appointments to schedule with shippers, receivers, and carriers, a process that involved back-and-forth emails, phone calls, or manual data entry into multiple Transport Management Systems (TMS).

These appointment-scheduling complexities vary based on the parties involved, from sending an email requesting appointment times to accessing a TMS and selecting what’s available as per their schedule.

Lacking proper analytics, sales representatives were unable to pinpoint peak appointment times, track cancellation rates, or discern customer preferences, often leading to shipment delays and incurred detention charges.

Solution

- Deployed multiple rule-based, automated workflows to pull information from incoming appointment requests (from emails, web forms, etc.) and automatically input it into the various TMS used to book pick-up and delivery appointments

- Developed a Power BI dashboard to visualize appointment trends, peak times, and cancellation rates, providing insights into customer behaviors, including frequent reschedules, preferred times, and typical lead times for booking appointments

- Delivered a reusable solution that could be leveraged for other business areas
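
A rule-based extraction step of the kind described in the first bullet might look like the sketch below. It is written in plain Python rather than as a UiPath workflow, and the email layout, the `Load:` and `Requested:` labels, and the output field names are assumptions made for the example.

```python
import re

# Hypothetical rule: request emails contain lines such as
# "Load: L-10482" and "Requested: 2025-03-14 09:30".
LOAD_RE = re.compile(r"Load:\s*(?P<load>[A-Z]-\d+)")
TIME_RE = re.compile(
    r"Requested:\s*(?P<date>\d{4}-\d{2}-\d{2})\s+(?P<time>\d{2}:\d{2})"
)


def extract_appointment(email_body):
    """Return a TMS-ready record, or None so a person can handle it."""
    load = LOAD_RE.search(email_body)
    when = TIME_RE.search(email_body)
    if not (load and when):
        return None
    return {"load_id": load["load"], "date": when["date"], "time": when["time"]}


email = "Hi team,\nLoad: L-10482\nRequested: 2025-03-14 09:30\nThanks"
record = extract_appointment(email)
print(record)
```

Requests that do not match the rules return `None`, which leaves them for manual handling instead of booking an appointment from incomplete data.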

Outcomes

- Bots operating 24/7 have led to over 15,000 monthly appointments being scheduled, resulting in a 50% reduction in manual scheduling hours

- The productivity of the operations team has improved by 50%, enabling staff to concentrate on high-value tasks rather than manual appointment-booking

- The increased accuracy in scheduled appointments has significantly decreased detention charges, thereby boosting overall customer satisfaction

Automated POD improves turnaround time by 95%

Client

Leading supply chain brokerage

Goal

Automate Proof of Delivery documentation process to increase efficiency and accuracy in data upload, validation and invoicing

Tools and Technologies

UiPath Orchestrator, UiPath Document Understanding, Microsoft Power BI, Oracle Transportation Management

Business Challenge

Proof of Delivery (POD) is a document that confirms an order has arrived at its destination and was successfully delivered before the invoice can be billed for payment.

Lack of an electronic POD system leads to inefficient, manual processing due to varied legal and contractual documentation requirements, resulting in longer billing cycles. Diverse formats and layouts from different carriers complicate data extraction from paper-based PODs.

Solution

- Developed a Document Processing Bot with UiPath AI Center, leveraging Document Understanding and Optical Character Recognition for managing various carrier documents

- Optimized data models for major carriers, focusing on the top five document types that represent 80% of the volume

- Implemented UiPath Action Center's "Human in the Loop" to handle exceptions and conducted 6-8 weeks of rigorous training on the Document Understanding model to ensure accuracy and meet confidence targets
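
The "Human in the Loop" exception handling above rests on a simple idea: fields extracted with low confidence go to a person instead of straight into invoicing. A minimal sketch follows, in plain Python rather than the UiPath tooling; the field names, the confidence values, and the 0.85 threshold are illustrative assumptions, not the client's confidence targets.

```python
def route_document(fields, threshold=0.85):
    """Split extracted fields into auto-accepted and needs-review groups.

    `fields` maps a field name to a (value, confidence) pair. The default
    threshold is an illustrative assumption.
    """
    accepted, review = {}, {}
    for name, (value, confidence) in fields.items():
        target = accepted if confidence >= threshold else review
        target[name] = value
    # Any low-confidence field sends the whole document to a human reviewer.
    queue = "human_review" if review else "auto_post"
    return queue, accepted, review


extracted = {
    "bol_number": ("BOL-7731", 0.97),
    "delivery_date": ("2025-02-11", 0.91),
    "receiver_signature": ("J. Smith", 0.62),
}
queue, accepted, review = route_document(extracted)
print(queue, sorted(review))
```

Corrections made by reviewers are the kind of feedback that can later be used to retrain the extraction model.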

Outcomes

- Achieved a 95% reduction in POD turnaround time, dropping from 48 hours to 2 hours, significantly boosting customer satisfaction

- Enhanced productivity by 87.5%, confirming receipt and condition of freight efficiently

- Reached 80% process accuracy, with continuous enhancement via automatic retraining

- Cut the billing cycle by 35%, allowing immediate use of data for customer invoicing
